BOINC

BOINC (Berkeley Open Infrastructure for Network Computing) is a middleware system for public-resource computing projects, developed at the Space Sciences Laboratory at the University of California, Berkeley. It is released under the GNU Lesser General Public License (LGPL), which permits its use even together with proprietary software.

Goals

The intention behind the development was to make the experience with public-resource computing gained in the SETI@home project available to the public. BOINC should encourage both the creation of new projects and participation in them. The main focus therefore lies on simple setup and low maintenance, combined with "incentives" for the participants.

Current projects running on BOINC

- Climateprediction.net: study climate change

- Einstein@home: search for gravitational waves

- LHC@home: simulations for the CERN LHC particle accelerator

- Predictor@home: protein-related diseases

- Rosetta@home: protein-related diseases (protein structure prediction)

- SETI@home: radio evidence of extraterrestrial life

- Cell Computing: biomedical research

Implementation

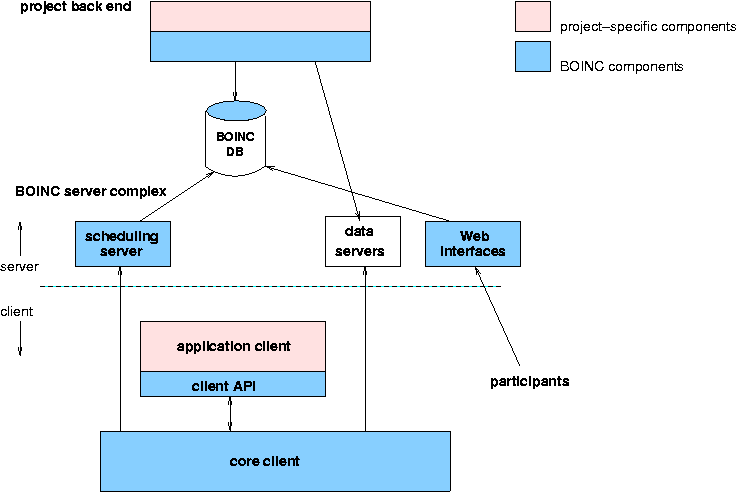

Each project is identified by a single master URL. Its web page describes the project and provides registration, the client download, and the URLs of the scheduling servers.

- The project back end supplies applications and work units, and processes the computational results

- Input and output files are distributed by data servers

- One or more scheduling servers communicate with participant hosts

- A relational database stores information about work, results, and participants

- Web interfaces for participants and developers provide the master URL, current project results, and other information

- The core client can run as a screensaver, an application, a Windows service, or a UNIX command-line program

- The client API provides information about resource utilization and an interface for result visualisation

- The application client does the actual computation on the work units

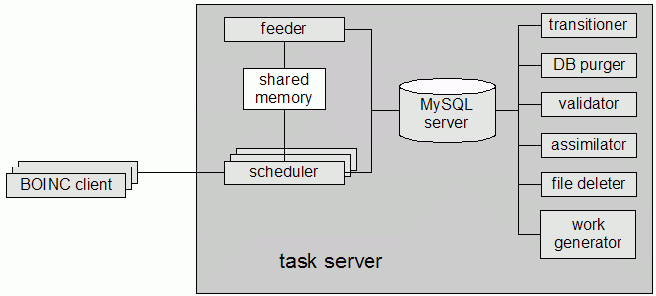

Task server (or scheduling server) in detail:

- The work generator creates new jobs and their input files

- The scheduler handles requests from BOINC clients

- The feeder caches jobs that have not yet been sent to clients

- The transitioner examines jobs whose state has changed and handles the change

- The database purger removes job and instance database entries that are no longer needed

- The validator compares the instances (results) of a work unit

- The assimilator handles jobs that are completed

- The file deleter deletes input and output files that are no longer needed
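A toy, single-process Python model can show how these daemons pass a job along. It is a sketch under simplifying assumptions, not BOINC's implementation. In BOINC the daemons are separate server programs coordinated through the project database. The `Job` class, its state names, the redundancy level of 2, and all function names below are hypothetical. "Running" a job on clients is simulated by a lambda.

```python
# Toy model of the task-server daemons; all names here are hypothetical.
from dataclasses import dataclass, field


@dataclass
class Job:
    name: str
    instances_needed: int = 2                    # assumed redundancy level
    results: list = field(default_factory=list)  # outputs returned by clients
    state: str = "new"                           # new -> sent -> returned -> valid/invalid -> assimilated
    files_deleted: bool = False


def work_generator(db, n):
    """Create n new jobs (input files are omitted in this sketch)."""
    for i in range(n):
        db.append(Job(name=f"wu_{i}"))


def feeder(db):
    """Collect jobs that have not been sent yet, as a cache for the scheduler."""
    return [job for job in db if job.state == "new"]


def scheduler(cache, run_on_clients):
    """Hand cached jobs to clients; run_on_clients simulates the returned outputs."""
    for job in cache:
        job.state = "sent"
        job.results.extend(run_on_clients(job))


def transitioner(db):
    """Advance jobs whose state changed: here, once enough results have arrived."""
    for job in db:
        if job.state == "sent" and len(job.results) >= job.instances_needed:
            job.state = "returned"


def validator(db):
    """Compare the instances of each returned job; accept it if they all agree."""
    for job in db:
        if job.state == "returned":
            job.state = "valid" if len(set(job.results)) == 1 else "invalid"


def assimilator(db, science_db):
    """Handle completed jobs by storing the agreed result."""
    for job in db:
        if job.state == "valid":
            science_db[job.name] = job.results[0]
            job.state = "assimilated"


def file_deleter(db):
    """Delete the files of assimilated jobs (only a flag in this sketch)."""
    for job in db:
        if job.state == "assimilated":
            job.files_deleted = True


def db_purger(db):
    """Remove database entries that are no longer needed."""
    db[:] = [job for job in db if not (job.state == "assimilated" and job.files_deleted)]


db, science_db = [], {}
work_generator(db, 3)
scheduler(feeder(db), lambda job: [len(job.name)] * job.instances_needed)
transitioner(db)
validator(db)
assimilator(db, science_db)
file_deleter(db)
db_purger(db)
print(science_db)  # {'wu_0': 4, 'wu_1': 4, 'wu_2': 4}
print(db)          # []
```

Each daemon only looks for jobs in a particular state and moves them on. This mirrors the decoupled design described above, although the real daemons run concurrently rather than in a fixed sequence.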

Computation

A work unit comprises all input needed for a computation, including files, arguments, and parameters (such as the required computing time, RAM, and storage, or a deadline).

A result describes the output of a computation: its output files and a reference to the work unit it belongs to.

Every file has a name that is unique within the project and carries attributes, such as a list of URLs for downloading or uploading and, optionally, a compression flag.
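These three entities can be sketched as Python dataclasses. The sketch assumes the structure only: every class, field name, and default value is hypothetical and does not follow BOINC's actual database schema or XML format. This includes the 7-day deadline and the `example.org` URLs. The `Project` registry enforces the rule that file names are unique within a project.

```python
from dataclasses import dataclass, field


@dataclass
class FileInfo:
    name: str                      # must be unique within the project
    urls: list[str]                # download or upload locations
    compressed: bool = False       # optional compression flag


@dataclass
class WorkUnit:
    name: str
    input_files: list[FileInfo]
    command_line: str = ""         # arguments passed to the application
    est_flops: float = 0.0         # estimated computing effort (hypothetical field)
    max_ram_bytes: int = 0         # required RAM (hypothetical field)
    max_disk_bytes: int = 0        # required storage (hypothetical field)
    deadline_seconds: int = 7 * 24 * 3600  # hypothetical default: one week


@dataclass
class Result:
    name: str
    workunit: WorkUnit             # reference to the work unit it belongs to
    output_files: list[FileInfo] = field(default_factory=list)


class Project:
    """Hypothetical registry that enforces project-wide unique file names."""

    def __init__(self):
        self.files: dict[str, FileInfo] = {}

    def register_file(self, f: FileInfo) -> None:
        if f.name in self.files:
            raise ValueError(f"file name {f.name!r} already used in this project")
        self.files[f.name] = f


project = Project()
inp = FileInfo("wu_1_in", ["http://data.example.org/wu_1_in"], compressed=True)
project.register_file(inp)
wu = WorkUnit("wu_1", [inp], command_line="--iterations 100")
res = Result("wu_1_0", wu, [FileInfo("wu_1_0_out", ["http://upload.example.org/"])])
try:
    project.register_file(FileInfo("wu_1_in", []))
except ValueError as e:
    print(e)  # file name 'wu_1_in' already used in this project
```

Because a result holds a reference to its work unit, several results (the redundant instances) can point to the same work unit.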

BOINC supports redundant computing to protect against malfunctioning computers and malicious participants: each work unit is computed more than once and the results are compared. The comparison is application-specific, because results that differ slightly (for example, because of floating-point differences between platforms) can still be equivalent. Homogeneous redundancy is also supported. This means that every instance of a work unit is computed in an equivalent environment (same CPU type and OS).
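The sketch below shows a possible validation step and a simple form of homogeneous redundancy. It makes several assumptions. A result is a list of floats. "Equivalent" means element-wise closeness via `math.isclose` with a relative tolerance of 1e-6. A canonical result needs a quorum of 2 agreeing results. A host's class is the pair (CPU, OS). In practice each project would supply its own comparison. The functions `results_equivalent`, `find_canonical`, `hr_class`, and `pick_hosts` are hypothetical.

```python
import math
from collections import Counter


def results_equivalent(a, b, rel_tol=1e-6):
    """Assumed application-specific check: equal length and every value close."""
    return len(a) == len(b) and all(math.isclose(x, y, rel_tol=rel_tol) for x, y in zip(a, b))


def find_canonical(results, quorum=2):
    """Return the first result that at least `quorum` results (itself included) agree with, else None."""
    for candidate in results:
        agreeing = sum(results_equivalent(candidate, other) for other in results)
        if agreeing >= quorum:
            return candidate
    return None


def hr_class(host):
    """Homogeneous redundancy: hosts with the same CPU and OS form one class."""
    return (host["cpu"], host["os"])


def pick_hosts(hosts, n):
    """Pick n hosts from a single class so all instances run in equivalent environments."""
    counts = Counter(hr_class(h) for h in hosts)
    for cls, count in counts.most_common():
        if count >= n:
            return [h for h in hosts if hr_class(h) == cls][:n]
    return []  # no class is large enough


results = [
    [1.0, 2.0, 3.0],
    [1.0, 2.0000000001, 3.0],  # tiny floating-point difference: still equivalent
    [9.0, 9.0, 9.0],           # faulty or malicious host
]
print(find_canonical(results))  # [1.0, 2.0, 3.0]

hosts = [
    {"id": 1, "cpu": "x86", "os": "Linux"},
    {"id": 2, "cpu": "x86", "os": "Windows"},
    {"id": 3, "cpu": "x86", "os": "Linux"},
]
print([h["id"] for h in pick_hosts(hosts, 2)])  # [1, 3]
```

With homogeneous redundancy the tolerance could in principle be tightened. Hosts in the same class are expected to produce numerically identical output.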

Accounting

"Credit" is a weighted combination of computation, storage, and network transfer. It is used to compare participants and stimulates competition between them, so BOINC supports exporting credit data as XML files for "leaderboards". Although each project has its own user base, BOINC provides cross-project identification based on matching e-mail addresses.
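A weighted credit combined with an XML export and a cross-project identifier could look like the following Python sketch. Everything in it is assumed for illustration. The weights in `WEIGHTS` are invented, and BOINC's real credit formula differs. The XML element names are made up. `cross_project_id`, a SHA-256 hash of the normalized e-mail address, is a hypothetical scheme rather than BOINC's actual one. It only shows how identical addresses can be matched across projects without exporting the address itself.

```python
import hashlib
import xml.etree.ElementTree as ET

# Hypothetical weights; not BOINC's actual credit formula.
WEIGHTS = {"cpu_hours": 100.0, "storage_gb_days": 1.0, "network_gb": 10.0}


def credit(usage):
    """Weighted combination of computation, storage, and network transfer."""
    return sum(WEIGHTS[k] * usage.get(k, 0.0) for k in WEIGHTS)


def cross_project_id(email):
    """Hypothetical: the same normalized e-mail yields the same ID in every project."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def leaderboard_xml(participants):
    """Export participants sorted by credit (element names are made up)."""
    root = ET.Element("users")
    ranked = sorted(participants, key=lambda p: credit(p["usage"]), reverse=True)
    for p in ranked:
        user = ET.SubElement(root, "user")
        ET.SubElement(user, "name").text = p["name"]
        ET.SubElement(user, "cross_project_id").text = cross_project_id(p["email"])
        ET.SubElement(user, "total_credit").text = f"{credit(p['usage']):.2f}"
    return ET.tostring(root, encoding="unicode")


participants = [
    {"name": "bob", "email": "bob@example.org",
     "usage": {"cpu_hours": 1.0, "storage_gb_days": 30.0}},
    {"name": "alice", "email": "Alice@Example.org",
     "usage": {"cpu_hours": 2.0, "network_gb": 0.5}},
]
print(credit(participants[1]["usage"]))  # 205.0
print(leaderboard_xml(participants))
```

A statistics site could then merge exports from several projects by grouping on `cross_project_id` and summing `total_credit`.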

Other features

To intensify the competition, participants can form teams. BOINC also supports user profiles and message boards, which are an inexpensive way to provide user support.

The anonymous platform mechanism lets participants compile and optimize the application client themselves if the project's source code is available.

The local scheduling policy of the core client uses the host's resources efficiently. It meets deadlines and respects the user's settings for distributing resources among projects.
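The Python sketch below shows one way such a policy could balance resource shares against deadlines on a single CPU. It is a strong simplification under stated assumptions, not the core client's actual algorithm. A task counts as "at risk" if its remaining time exceeds the CPU time its project's share would give it before the deadline. At-risk tasks are run earliest-deadline-first. Otherwise the project furthest below its share of the CPU time used so far goes next. `Task`, `pick_next`, and the example numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    project: str
    remaining_hours: float
    deadline_hours: float  # hours from now


def pick_next(tasks, shares, used_hours):
    """Choose the next task to run on one CPU (simplified, hypothetical policy)."""
    if not tasks:
        return None
    total_share = sum(shares.values())
    frac = {p: s / total_share for p, s in shares.items()}

    # Deadline mode: a task is at risk if its project's share cannot finish it in time.
    at_risk = [t for t in tasks if t.remaining_hours > t.deadline_hours * frac[t.project]]
    if at_risk:
        return min(at_risk, key=lambda t: t.deadline_hours)  # earliest deadline first

    # Normal mode: favour the project furthest below its share of past CPU time.
    total_used = sum(used_hours.values()) or 1.0

    def deficit(t):
        return frac[t.project] - used_hours.get(t.project, 0.0) / total_used

    return max(tasks, key=deficit)


shares = {"SETI@home": 100, "Einstein@home": 100}  # user settings: equal shares
tasks = [
    Task("SETI@home", remaining_hours=5, deadline_hours=100),
    Task("Einstein@home", remaining_hours=8, deadline_hours=120),
]
print(pick_next(tasks, shares, {"SETI@home": 10, "Einstein@home": 2}).project)  # Einstein@home

urgent = Task("SETI@home", remaining_hours=6, deadline_hours=10)
print(pick_next(tasks + [urgent], shares, {}).project)  # SETI@home
```

In the second call the user's share settings are overridden only for as long as a deadline is endangered. Once no task is at risk, the share-based choice applies again.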

Outlook

BOINC is still under development. Current work focuses on efficient data replication for large work units, garbage collection when resources are scarce, and the use of alternate disks.

The most recent major news suggests that this process will take a long time as well: on 10/10/05, the data migration of SETI@home Classic was completed after three years, and merging is still in progress.


--Butzek 14:10, 18 Nov 2005 (CET)